A practical guide to structured interviews, the STAR format, and how AI dynamically adapts follow-up questions to surface what candidates are really made of.

Most hiring managers will give you a similar answer when asked how they know a candidate is a good fit. They interviewed well. There was positive energy. It just felt right.

The problem with that answer is that it does not predict job performance. It predicts interview performance. And those two things are far less correlated than most hiring teams assume.

Research in organisational psychology has spent decades studying exactly this question: which interview approaches actually predict whether someone will succeed in a role? The findings are consistent, striking, and largely ignored by most companies still running conversations that feel more like a chat than a structured evaluation.

This blog breaks down the science of predictive interviewing, explains how the STAR format works and why, and shows how AI-powered interview platforms like easemyhiring.ai take the concept further by dynamically adapting follow-up questions in real time to probe deeper where it matters most.

Why do most interview questions not predict performance?

Before building a better interview process, it helps to understand why the typical one fails. The core issue is that most interview questions are designed to be answered well by someone who has prepared for them, not by someone who has actually done the thing the role requires.

Consider the classic opener: tell me about yourself. It rewards articulate self-presentation. It tells you almost nothing about whether someone can do the job. Or the equally common: where do you see yourself in five years? This question tests how well someone has internalised what interviewers want to hear. It does not test capability, resilience, judgement, or any other quality that actually determines job performance.

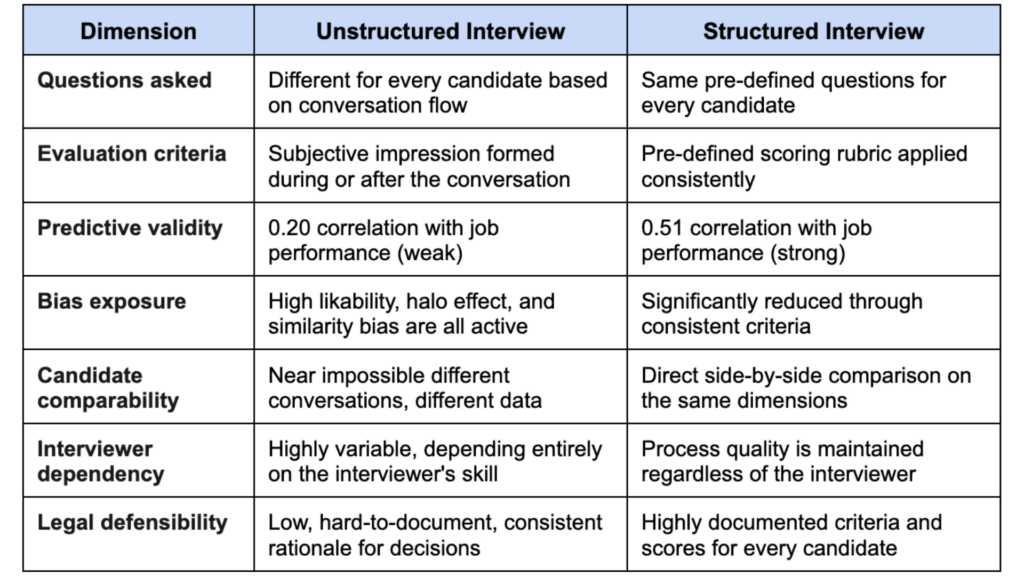

- 0.20 predictive validity of unstructured interviews for job performance. Essentially a weak predictor. (Schmidt & Hunter, 1998, still the benchmark study in IO psychology)

- 0.51 predictive validity of structured interviews. More than twice as predictive. (Schmidt & Hunter, same study, replicated multiple times since)

- 38% of hiring decisions influenced by factors irrelevant to job performance, including likeability, appearance, and similarity to the interviewer. (Harvard Business Review)

The gap between 0.20 and 0.51 is enormous in practical terms. It is the difference between a process that is barely better than chance and one that gives you a genuine, statistically meaningful signal about future performance. The entire science of structured interviewing exists to close that gap.
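
To make that gap concrete, one common (if rough) way to read a validity coefficient is to square it, which gives the share of variation in job performance associated with interview scores. The short Python sketch below does that arithmetic using only the two figures quoted above. The function names are illustrative assumptions, and squaring is an interpretive convention rather than something the cited study prescribes.

```python
# Validity coefficients as quoted in this post (correlation with job performance).
UNSTRUCTURED_VALIDITY = 0.20
STRUCTURED_VALIDITY = 0.51


def variance_explained(validity: float) -> float:
    """Share of variance in job performance associated with interview scores (r squared)."""
    return validity ** 2


def validity_ratio(better: float, worse: float) -> float:
    """How many times larger one validity coefficient is than the other."""
    return better / worse


if __name__ == "__main__":
    for label, r in [("unstructured", UNSTRUCTURED_VALIDITY),
                     ("structured", STRUCTURED_VALIDITY)]:
        print(f"{label}: r = {r:.2f}, variance explained = {variance_explained(r):.1%}")
    ratio = validity_ratio(STRUCTURED_VALIDITY, UNSTRUCTURED_VALIDITY)
    print(f"ratio of coefficients: {ratio:.2f}x")
```

On these figures, unstructured interviews account for roughly 4% of the variation in job performance and structured interviews for roughly 26%.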

The goal of a successful interview question is not to give the candidate a chance to impress you. Its goal is to give you a reliable signal about their ability to do the specific job you’re hiring for.

Structured vs unstructured interviews: The full comparison

Predictive validity figures from Schmidt & Hunter (1998), Psychological Bulletin. Bias data from Harvard Business Review research on unconscious bias in hiring.

The evidence is unambiguous. Structured interviews predict job performance significantly better than unstructured ones, are less susceptible to bias, and are more defensible if a hiring decision is challenged. Yet most organisations still rely primarily on unstructured conversations because they feel more natural, more human, and less like an assessment.

That comfort is costing them hiring quality. The antidote is not to make interviews feel robotic. The goal is to build a structured framework that asks better questions and applies consistent scoring while still allowing genuine human conversation within it.

What makes an interview question actually predictive?

Not all structured questions are created equal. Researchers distinguish between several types of interview questions based on how well they predict actual job behaviours.

Behavioural questions: The gold standard

Behavioural interview questions are grounded in a simple and well-validated principle: past behaviour is the best available predictor of future behaviour. When you ask a candidate to describe a specific situation they have actually been in, the story they tell reveals far more than any hypothetical answer could.

A well-crafted behavioural question asks the candidate to recall and describe a real experience. Tell me about a time when you had to make a difficult decision with incomplete information. Walk me through a situation where you had to rebuild trust with a stakeholder after something went wrong. You cannot answer these questions with a generic script because they require drawing from experience.

Situational questions: Testing judgement

Situational questions present a hypothetical scenario relevant to the role and ask the candidate what they would do. What would you do if a key client escalated a complaint to your CEO before you had a chance to resolve it yourself? These questions assess judgement and decision-making frameworks rather than experience, which makes them especially useful for candidates who are relatively new to a field and have fewer concrete examples to draw on.

The research shows situational questions have slightly lower predictive validity than behavioural ones for experienced candidates, but they are valuable for roles where practical experience is less important than reasoning ability and values alignment.

Questions to avoid entirely

Brain teasers and puzzle questions have near-zero predictive validity for job performance and primarily measure confidence under pressure. Leading questions that signal the desired answer produce socially desirable responses, not truthful ones. Personal questions about family, health, or plans are often legally problematic and entirely irrelevant to performance. Hypothetical character questions like ‘If you were an animal, what would you be?’ measure nothing useful and make your company look amateurish to strong candidates.

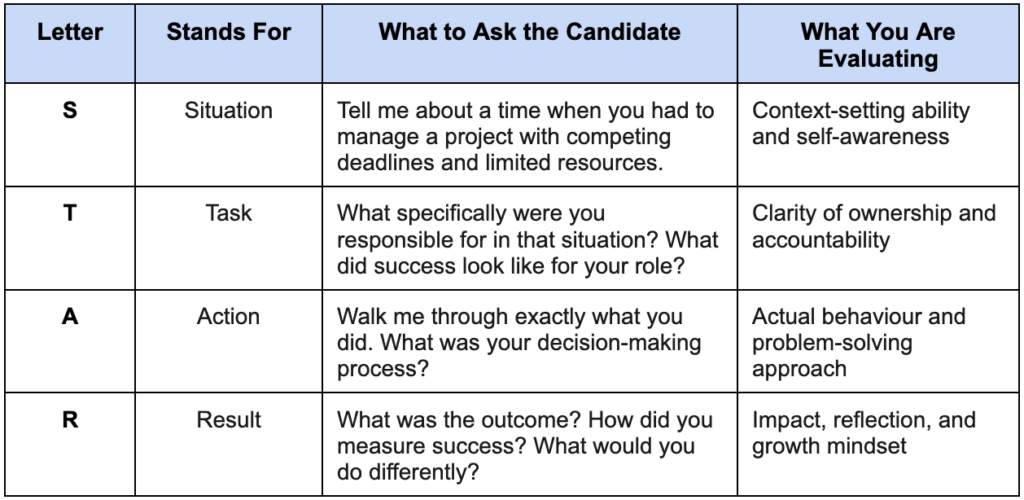

The STAR format: How to structure questions and evaluate answers

The STAR framework is the most widely used and research-backed structure for both asking behavioural interview questions and evaluating the answers you receive. Understanding it properly changes how you design your entire question set.

STAR stands for Situation, Task, Action, and Result. The framework works in two directions. It guides how you ask follow-up probes to obtain a complete answer, and it provides the structure against which you evaluate the completeness and quality of what a candidate tells you.

The most common mistake interviewers make with STAR is accepting a partial answer and scoring it as complete. A candidate who describes the situation and the result without ever clearly explaining the specific actions they personally took has given you half an answer. You have learned what happened to them. You have not learned what they did.

This is where structured probing matters enormously. When a candidate skips straight to the result without explaining their decision-making, the interviewer needs to bring them back. What specifically did you do? What options did you consider before choosing that approach? What was your personal contribution, as distinct from what the team did?

These probes are not peripheral. They are the moments when you get the real answer. And they require an interviewer who is trained to notice when a STAR response is incomplete and skilled enough to redirect without making the candidate feel interrogated.

A complete STAR answer is evidence. An incomplete STAR answer is a story. Your job as an interviewer is to know the difference and keep probing until you have evidence.
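
The completeness check described above can be sketched as a small data structure. This is a minimal illustration, assuming the interviewer records a short note per STAR component as the candidate speaks. The class and function names are hypothetical, and only the Action probe wording comes from this post; the other three probes are placeholder phrasing.

```python
from dataclasses import dataclass
from typing import List, Optional

# One follow-up probe per STAR component, in S-T-A-R order.
# The "action" wording is taken from this post; the rest is illustrative.
PROBES = {
    "situation": "What was the context, and when did this happen?",
    "task": "What were you personally responsible for in that situation?",
    "action": "What specifically did you do? What options did you consider "
              "before choosing that approach?",
    "result": "What was the outcome, and how did you know it worked?",
}


@dataclass
class StarAnswer:
    """Interviewer notes for one answer; None means the component was not covered."""
    situation: Optional[str] = None
    task: Optional[str] = None
    action: Optional[str] = None
    result: Optional[str] = None

    def missing(self) -> List[str]:
        """STAR components that still lack evidence, in S-T-A-R order."""
        return [name for name in PROBES if not getattr(self, name)]

    def is_complete(self) -> bool:
        """True only when all four components have a note."""
        return not self.missing()


def next_probe(answer: StarAnswer) -> Optional[str]:
    """Return the probe for the first missing component, or None if complete."""
    gaps = answer.missing()
    return PROBES[gaps[0]] if gaps else None


if __name__ == "__main__":
    # A candidate who jumps from the situation straight to the result.
    answer = StarAnswer(situation="Key client threatened to leave",
                        result="Client renewed")
    print(answer.missing())
    print(next_probe(answer))
```

The point of the structure is that an answer cannot be scored as complete while any component is empty, which is exactly the mistake described earlier in this section.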

How do you write interview questions for specific competencies?

The most effective interview question banks are built backwards from the competencies the role actually requires, not forwards from a generic list of interview questions found online. The process is straightforward once you understand the logic.

First, identify the three to five competencies that most strongly predict success in the specific role. For a sales role, the key competencies might be resilience under rejection, persuasion through objection handling, and pipeline discipline. For a product manager, it might be cross-functional influence, prioritisation under uncertainty, and stakeholder communication. For a finance analyst, it might be attention to detail, analytical rigour, and integrity under time pressure.

Second, write one to two behavioural questions for each competency that would be impossible to answer well without genuine relevant experience. The question should require the candidate to recall a specific situation that actually happened, not construct a hypothetical one.

Third, build a scoring rubric for each question before interviews begin. What does a strong answer look like? What does a weak one look like? What signals are you specifically listening for? When this rubric exists before the interview starts, scoring becomes objective. Without it, scoring is just another form of gut feel with a number attached.

Example: writing questions for the competency of resilience

A poorly written question for resilience: ‘How do you handle failure?’ This is too broad, too easy to prepare for, and invites a generic answer about learning from mistakes that every prepared candidate will give.

A well-written behavioural question for resilience is: ‘Tell me about the most significant professional setback you have experienced in the last two years. What specifically happened, what was your immediate reaction, and how did you work through it?’ This requires real experience. It probes the emotional reality of the setback, not just the tidy retrospective lesson. And it is very difficult to fake convincingly without having actually been through something difficult.
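
A rubric for the resilience question above might be written down before interviews begin along the following lines. This is a sketch under stated assumptions: the 1 to 5 scale, the anchor descriptions, and the listening signals are all hypothetical examples, not a validated instrument.

```python
from dataclasses import dataclass, field
from typing import Dict, List

SCALE = range(1, 6)  # assumed scale: 1 (weak) to 5 (strong)


@dataclass(frozen=True)
class RubricQuestion:
    competency: str
    question: str
    anchors: Dict[int, str]  # score -> what an answer at that level looks like
    signals: List[str] = field(default_factory=list)  # what to listen for


def check_rubric(q: RubricQuestion) -> None:
    """Raise ValueError unless both ends of the scale have a written anchor."""
    for level in (min(SCALE), max(SCALE)):
        if level not in q.anchors:
            raise ValueError(f"{q.competency}: no anchor for score {level}")


def record_score(q: RubricQuestion, score: int) -> Dict[str, object]:
    """Record a score, rejecting anything outside the agreed scale."""
    if score not in SCALE:
        raise ValueError(f"score {score} is outside the 1-5 scale")
    return {"competency": q.competency, "score": score}


# Hypothetical rubric content for illustration only.
RESILIENCE = RubricQuestion(
    competency="resilience",
    question=("Tell me about the most significant professional setback you have "
              "experienced in the last two years."),
    anchors={
        1: "Generic lesson about learning from mistakes; no specific setback.",
        3: "Specific setback described, but the recovery steps are vague.",
        5: "Specific setback, honest first reaction, and concrete recovery actions.",
    },
    signals=["names a real event", "describes own reaction", "explains what changed"],
)

if __name__ == "__main__":
    check_rubric(RESILIENCE)
    print(record_score(RESILIENCE, 4))
```

Writing the anchors first forces the team to agree on what strong and weak answers look like before anyone has met a candidate.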

Where do human interviewers consistently fall short?

Even the best-designed interview question bank is only as effective as the consistency with which it is applied. And this is precisely where human-led structured interviews have a persistent, well-documented weakness.

Interviewers drift from the script. They follow a thread that interests them and abandon questions that might have been more diagnostic. They probe more deeply with candidates they like and let weak answers slide from candidates who have made a strong first impression. They forget to ask follow-up questions when the primary answer is incomplete. They score answers differently depending on who they have interviewed before the candidate in question.

None of this reflects malicious intent. It reflects how human attention and cognition work under the pressure of a real-time conversation. You cannot fully listen, evaluate, probe, score, and manage the interpersonal dynamic of an interview simultaneously without something slipping.

This is the structural limitation that AI-powered interviewing addresses. Not by removing the human element from hiring, but by ensuring that the question-asking, the probing, and the evaluation layer operate with complete consistency for every candidate, regardless of volume, fatigue, or interviewer variability.

How does AI dynamically adapt follow-up questions in real time?

This is where the conversation moves from structured interviewing principles to what AI enables that human interviewers cannot reliably deliver at scale.

When easemyhiring.ai conducts a first-round interview, it does not simply read a list of pre-set questions and move on regardless of how the candidate responds. It analyses the candidate’s response in real time and adapts its follow-up questions based on what it hears. This is dynamic probing, and it is the capability that most closely mirrors what a skilled human interviewer does in their best moments, applied consistently to every candidate every time.

The system is built to detect specific response patterns that signal an incomplete or insufficiently evidenced answer and trigger targeted follow-up questions designed to surface what is actually there underneath the surface response.

What makes this capability significant is not just the sophistication of the follow-up questions themselves. It is the consistency with which they are applied. A human interviewer might notice that a candidate’s answer was vague and probe effectively with candidate one and candidate twenty. By candidate forty, after a full day of interviews, the same signal might slip past unnoticed. The follow-up is never asked. The weak answer is scored as adequate. A candidate who should have been eliminated advances.

Easemyhiring.ai applies the same probing framework to candidate one and candidate four hundred. The quality of evaluation does not degrade with volume. The follow-up questions that reveal genuine competency are always asked, and the performance report reflects the full depth of what each candidate actually demonstrated, not what an interviewer remembered at the end of a long day.
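
The idea of applying one probing framework identically to every candidate can be illustrated with a deliberately simple sketch. This is not how easemyhiring.ai is implemented, which the post does not describe. It assumes two toy keyword rules standing in for whatever response analysis a real system performs, and every name in it is hypothetical.

```python
import re
from typing import Callable, List, NamedTuple


class Rule(NamedTuple):
    name: str
    triggered: Callable[[str], bool]  # True when the answer shows the gap
    follow_up: str


def _lacks_first_person(answer: str) -> bool:
    """Gap: the candidate never uses 'I', so their own actions are unclear."""
    return re.search(r"\bI\b", answer) is None


def _lacks_outcome(answer: str) -> bool:
    """Gap: none of a few outcome words appears anywhere in the answer."""
    pattern = r"\b(result|outcome|increased|reduced|led to)\b"
    return re.search(pattern, answer, re.IGNORECASE) is None


# Toy rules for illustration; a real system would need far richer analysis.
RULES = [
    Rule("team_only", _lacks_first_person,
         "What was your personal contribution, as distinct from what the team did?"),
    Rule("no_result", _lacks_outcome,
         "What was the outcome, and how did you measure it?"),
]


def follow_ups(answer: str, rules: List[Rule] = RULES) -> List[str]:
    """Return the follow-up for every rule that fires, always in the same order."""
    return [rule.follow_up for rule in rules if rule.triggered(answer)]


if __name__ == "__main__":
    vague = "We rebuilt the onboarding flow and the team shipped it in a month."
    specific = "I rewrote the pricing page, which increased signups."
    print(follow_ups(vague))     # both rules fire
    print(follow_ups(specific))  # no follow-up needed
```

Because the rules are plain functions of the answer text, the same answer produces the same follow-ups whether it comes from the first candidate of the day or the last.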

Dynamic AI follow-up questioning does not replace the skilled human interviewer. It ensures that the skill level of the best interview you have ever run is the baseline for every single interview you conduct.

Putting it all together: The predictive interview framework

Creating an interview process that accurately predicts job performance involves integrating the appropriate principles in the correct order. Here is what that looks like in practice.

Start by identifying the three to five competencies that most strongly predict success in the specific role. These should be grounded in data about your top performers, not assumptions about what excellence looks like. Then build one to two behavioural questions per competency, written to require genuine experience and specific examples. Build a scoring rubric for each question before the first interview is conducted.

In the first round, use AI-powered structured interviews through easemyhiring.ai to conduct consistent, probed evaluations of every candidate. Every applicant receives the same questions, the same follow-up depth, and the same evaluation criteria applied to their responses. Your hiring managers receive objective, comparable data on the entire candidate pool through instant performance reports, even before booking a single human interview slot.
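
What "comparable data on the entire candidate pool" could look like is sketched below. It assumes each answer has already been given a rubric score, and it uses a plain unweighted average per competency. The candidate names, scores, and the choice of averaging are illustrative assumptions, not the format of any real performance report.

```python
from statistics import mean
from typing import Dict, List, Tuple

Scores = Dict[str, List[int]]  # competency -> rubric scores for its questions


def competency_profile(scores: Scores) -> Dict[str, float]:
    """Average the question scores within each competency."""
    return {competency: mean(values) for competency, values in scores.items()}


def rank_candidates(pool: Dict[str, Scores]) -> List[Tuple[str, float]]:
    """Sort candidates by the mean of their competency averages, highest first."""
    overall = {
        name: mean(list(competency_profile(scores).values()))
        for name, scores in pool.items()
    }
    return sorted(overall.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Made-up scores for two hypothetical candidates.
    pool = {
        "candidate_a": {"resilience": [4, 5], "pipeline discipline": [3, 3]},
        "candidate_b": {"resilience": [3, 3], "pipeline discipline": [4, 4]},
    }
    for name, score in rank_candidates(pool):
        print(f"{name}: {score:.2f}")
```

Keeping the per-competency averages alongside the overall figure matters, because two candidates with similar totals can have very different strengths for the human rounds to explore.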

In the later stages, human interviewers concentrate on the qualities that need in-person assessment, such as culture fit, leadership, and interpersonal dynamics, along with the topics the AI performance reports have flagged for deeper discussion. The human conversation is richer and more targeted because the AI has already done the foundational evaluation work.

The result is a process where every hiring decision is built on evidence, not impression. Where the question is not ‘Did they interview well?’ but ‘Do they have the competencies this role requires?’ And where the answer comes from a structured, probed, consistently applied evaluation rather than how the conversation felt on the day.

The bottom line

Most interviews fail to predict job performance because they were not designed to. They were designed to be comfortable, to feel like a conversation, and to allow the interviewer to form an impression. None of those things is correlated with whether someone will succeed in the role.

Writing interview questions that actually predict performance requires understanding what you are measuring, building questions that require real evidence to answer, applying a consistent scoring framework, and probing deeply enough to get past the prepared surface and reach the experience underneath.

AI-powered interview platforms like easemyhiring.ai apply these principles at scale, achieving a level of consistency that no human team can match at volume, and they provide dynamic follow-up probing that reveals the depth of evidence your hiring decisions should be based on.

Better questions. Deeper probing. Consistent evaluation. That is what a hiring process that actually predicts performance looks like.

Stop interviewing on instinct. Start hiring based on evidence.

If your interview questions are not built around specific competencies, backed by a scoring rubric, and probed consistently for every candidate, your hiring decisions are more influenced by first impressions than by performance data. easemyhiring.ai changes that from the first interview.

- Structured, role-specific AI interviews with competency-based questions for every candidate. No drift from the script. No skipped follow-ups. No fatigue-affected scoring.

- Dynamic follow-up probing that adapts to each candidate's response in real time. Every vague answer is challenged. Every incomplete STAR response is completed.

- Instant performance reports built on objective, consistent evaluation criteria. Your hiring managers receive data, not impressions, before a single final-round interview is scheduled.

- Bias-reduced first-round evaluation at any scale. The same rigour applied to Candidate 1 and Candidate 500.

- Human interviewers are freed to focus on what only humans can assess. Culture, leadership, and the judgement calls that genuinely require a person in the room.

Book your free consultation at easemyhiring.ai and create an interview process that supports your hiring decisions.

The best candidates deserve a process that evaluates them fairly. Your company deserves a process that actually finds them.